Reward shaping

1. [80%] Policy invariance under reward transformations: theory and application to reward shaping

Andrew Ng et al., ICML 1999

- Potential-based reward shaping WILL NOT change the optimal policy!

- It only helps the agent converge to the optimal policy faster.

- What if the results with and without reward shaping differ? Then the problem has multiple optimal policies, and reward shaping helps the agent converge to one of them faster than to the others. The resulting policies are equivalent (equally optimal).

1.1. How to shape the reward

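- $R' = R + F$, where $F(s, a, s') = \gamma \Phi(s') - \Phi(s)$ is a difference of potentials and $\Phi$ is a real-valued function of the state.

- Equation (4), $V^*_{M'}(s) = V^*_M(s) - \Phi(s)$, suggests that $\Phi(s) = V^*_M(s)$ might be a good shaping potential.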
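A minimal sketch of the shaping rule, assuming the standard potential-based form $F(s, a, s') = \gamma \Phi(s') - \Phi(s)$. The names `shaping_term`, `shaped_reward`, and the toy potential `phi` are hypothetical and only serve the illustration.

```python
from typing import Callable, Hashable

State = Hashable


def shaping_term(phi: Callable[[State], float], s: State, s_next: State, gamma: float) -> float:
    """F(s, a, s') = gamma * phi(s') - phi(s); the action does not appear in F."""
    return gamma * phi(s_next) - phi(s)


def shaped_reward(r: float, phi: Callable[[State], float], s: State, s_next: State, gamma: float) -> float:
    """R'(s, a, s') = R(s, a, s') + F(s, a, s')."""
    return r + shaping_term(phi, s, s_next, gamma)


# Toy potential on integer states (an assumption): states closer to 3 get a higher potential.
phi = lambda s: -abs(3 - s)

print(shaped_reward(-1.0, phi, s=0, s_next=1, gamma=1.0))  # step toward 3: 0.0
print(shaped_reward(-1.0, phi, s=1, s_next=0, gamma=1.0))  # step away from 3: -2.0
```

With this toy potential, a step toward state 3 earns a bonus and a step away is penalised, while the base reward of -1 per step stays the same.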

1.2. How to define $\Phi$

- E.g.:

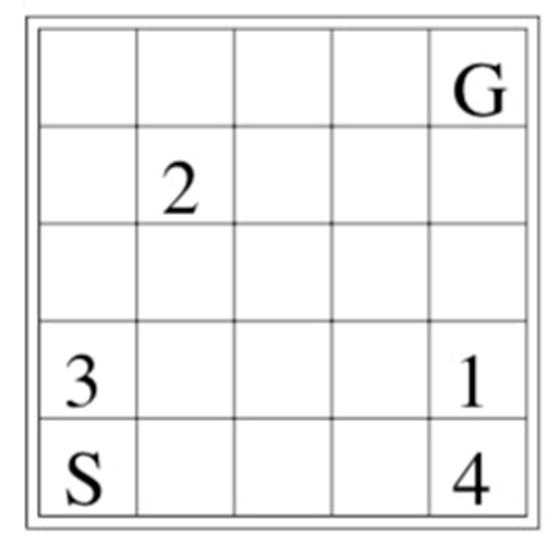

- In a grid-world problem, the Manhattan distance to the goal (negated, so that states closer to the goal get a higher potential) might be a good choice for $\Phi$.

- Understanding: a human tells the agent that moving toward the goal is likely to reach the end state faster. With such a potential function, the agent is less likely to explore directions that go against the human's suggestion, so it reaches the goal faster. In other words, the potential function gives the agent a rough search direction, and the directions it points to get a higher search weight.

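A minimal sketch of a grid-world potential, assuming states are `(row, col)` cells and the potential is the negative Manhattan distance to the goal. The function name `manhattan_potential`, the goal cell, and the sample cells are hypothetical.

```python
from typing import Tuple

Cell = Tuple[int, int]  # (row, col)


def manhattan_potential(state: Cell, goal: Cell) -> float:
    """Negative Manhattan distance to the goal: the closer to the goal, the higher the potential."""
    return -float(abs(state[0] - goal[0]) + abs(state[1] - goal[1]))


goal = (0, 3)   # assumed goal cell
gamma = 1.0     # undiscounted, to keep the arithmetic easy to read
s = (2, 0)
toward, away = (1, 0), (3, 0)  # one step closer to / farther from the goal

# Shaping term F = gamma * phi(s') - phi(s) for each move.
f_toward = gamma * manhattan_potential(toward, goal) - manhattan_potential(s, goal)
f_away = gamma * manhattan_potential(away, goal) - manhattan_potential(s, goal)
print(f_toward, f_away)  # 1.0 -1.0
```

The sign is the design choice that matters here: with a positive distance as the potential, moves away from the goal would be the ones rewarded.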

1.3. Code how-to

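- Use the equations from 1.1: the shaped reward $R' = R + F$, the shaping term $F(s, a, s') = \gamma \Phi(s') - \Phi(s)$, and the potential $\Phi$.

- Define your own $\Phi$ according to the problem.

- E.g., for value iteration, replace the reward with the shaped reward from the equations above when computing the value table (the value function).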

- Question: do you need to apply the shaping term $F$ as well when extracting the policy from a given value table?
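The steps above can be sketched on a small deterministic grid world. Everything concrete here is an assumption made for illustration: the 3x4 grid, the goal cell, the reward of -1 per step, the discount of 0.9, and all function names. One way to look at the open question: if the value table was computed from the shaped reward, the sketch extracts the policy with the same shaped reward. Because $F$ contributes $-\Phi(s)$ equally to every action taken in state $s$, the argmax over actions should not change, and the sketch checks this numerically.

```python
import itertools

ROWS, COLS, GOAL, GAMMA = 3, 4, (0, 3), 0.9  # assumed toy problem
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
STATES = list(itertools.product(range(ROWS), range(COLS)))


def step(s, a):
    """Deterministic move; bumping into a wall leaves the state unchanged."""
    r, c = s[0] + ACTIONS[a][0], s[1] + ACTIONS[a][1]
    return (r, c) if 0 <= r < ROWS and 0 <= c < COLS else s


def phi(s):
    """Assumed potential: negative Manhattan distance to the goal (0 at the goal)."""
    return -float(abs(s[0] - GOAL[0]) + abs(s[1] - GOAL[1]))


def reward(s, a, s_next, shaped):
    """Base reward of -1 per step, plus F = gamma * phi(s') - phi(s) when shaping is on."""
    r = -1.0
    return r + (GAMMA * phi(s_next) - phi(s) if shaped else 0.0)


def q_value(V, s, a, shaped):
    """One-step lookahead using the same (shaped or plain) reward as the value table."""
    s_next = step(s, a)
    return reward(s, a, s_next, shaped) + GAMMA * V[s_next]


def value_iteration(shaped, tol=1e-10):
    V = {s: 0.0 for s in STATES}
    while True:
        delta = 0.0
        for s in STATES:
            if s == GOAL:
                continue  # terminal state keeps value 0
            best = max(q_value(V, s, a, shaped) for a in ACTIONS)
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            return V


def greedy_actions(V, s, shaped, eps=1e-8):
    """All actions whose Q-value is within eps of the best one (ties are kept)."""
    q = {a: q_value(V, s, a, shaped) for a in ACTIONS}
    best = max(q.values())
    return {a for a, v in q.items() if best - v < eps}


V_plain = value_iteration(shaped=False)
V_shaped = value_iteration(shaped=True)

# Compare the sets of greedy actions state by state.
same = all(
    greedy_actions(V_plain, s, False) == greedy_actions(V_shaped, s, True)
    for s in STATES
    if s != GOAL
)
print(same)
```

Comparing sets of greedy actions rather than a single argmax keeps ties between equally good moves from showing up as spurious differences. In this toy setup the shaped table also differs from the plain one by exactly the potential, i.e. `V_shaped[s] + phi(s)` matches `V_plain[s]` up to floating-point error.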